NEWS_

StreetDrone News

Our latest developments

Airlines: the future of mobility operators

Guest post by Mark Preston

I was sitting on the tarmac on a British Airways flight returning from a weekend away in Lisbon, pondering a recent comment by a colleague who said that it wouldn’t be the traditional OEMs that survive the coming mobility revolution. Look at previous attempts to move down the verticals into other areas as diverse as mapping, CAD systems, Kwikfit, and rental cars.

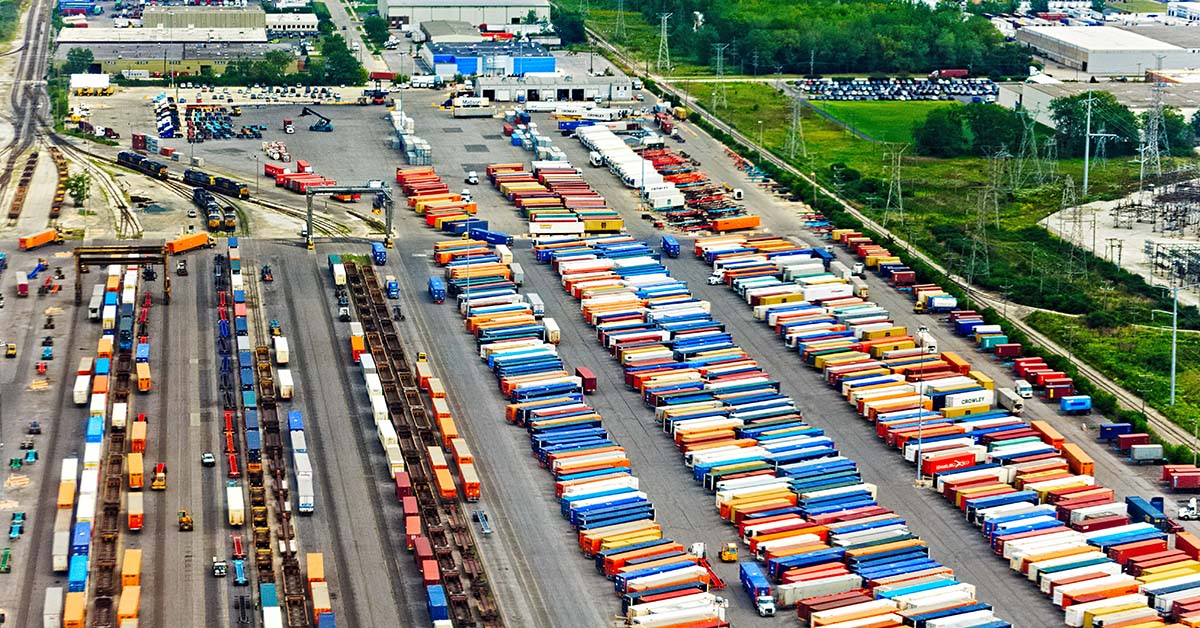

How autonomous vehicles are revolutionising slow, off-highway environments

Autonomous vehicles have the potential to revolutionise industrial environments, bringing new levels of efficiency and productivity. From construction sites to ports, autonomous trucks are improving operational and environmental efficiency with faster delivery times and lower fuel and labour costs. As companies continue to embrace this technology, what can be expected from autonomous vehicle workflow performance?

How autonomous trucks are transforming the container shipping industry

For port managers looking for ways to reduce congestion and cut costs, autonomous trucks provide a low-cost, efficient solution for streamlining processes like container loading, moving, and unloading. In this blog post we’ll explore how these cutting-edge technologies are changing the way business is done in port facilities everywhere!

How to select the right automation technology for your distribution centre

Automation technologies can have a significant impact on business operations in terms of safety, efficiency and cost. It is important to understand the basics of automation technology, as well as analyse the key performance indicators that could be impacted when introducing automation into your distribution centre.

Benefits of using autonomous fleets in smart ports

Autonomous fleet technologies offer a range of potential benefits over traditional methods of port operation – from increased safety to improved cargo tracking – that are making them an ever-more attractive option for transportation operations managers looking to stay ahead in an ever-changing industry. In this blog post, we’ll explore the various advantages of using autonomous fleets within smart ports, delving into exactly how they could revolutionise your operations.

Overview of automation in distribution centres

Automation has revolutionised many industries, transforming the way businesses store, receive and distribute goods. The logistics sector is no exception; automation in distribution centres has enabled efficient warehouse operations that provide an unparalleled level of accuracy and consistency to businesses across various industries.

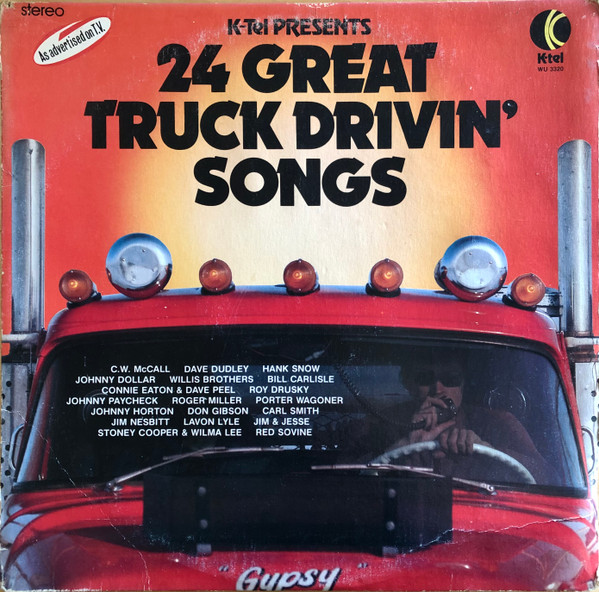

24 Great Truckin’ Songs

In 1976 I was 8 years old, living on a farm in Kiacatoo, outside Condobolin in central New South Wales, and… into trucks. For Christmas that year I was given my first LP: ‘24 Great Truck Drivin’ Songs’. It even had CB Trucker Talk code words on the back to decode the “secret” trucker talk, and this led me to build myself a sideband radio to listen to the truck drivers in the vicinity!

Autonomously Reversing an Articulated Vehicle – A Case Study

In this case study we explain the solution we’ve implemented for reversing trucks into tight loading bays at distribution centres and logistics sites, and the results site managers can expect from adopting these promising advances in automation technology to get ahead of their competitors.

StreetDrone to take proven autonomous HGV logistics from proof-of-concept to commercial service

The V-CAL project has been awarded £4 million from the government’s £42 million Commercialising Connected and Automated Mobility (CAM) competition through the Centre for Connected and Autonomous Vehicles (CCAV).

Autonomous Logistics: A New Way to Streamline Your Supply Chain

Autonomous logistics offers businesses an innovative way to streamline their supply chains while reducing the labour costs associated with traditional transportation methods. By utilising autonomous trucks, businesses can ensure their shipments arrive on time and free of the damage caused by poor reversing or other human error. For business owners looking for ways to stay ahead of the competition in today’s constantly changing market landscape, investing in autonomous logistics may just be the key they need!

StreetDrone Tech Delivers Autonomously to Nissan Car Plant

On June 10th 2022, StreetDrone’s technology, built into a 40-tonne Terberg logistics vehicle as part of the part-UK-government-funded 5G CAL (5G Connected Automated Logistics) project, delivered a fully-loaded trailer autonomously from an on-site parts warehouse via a live delivery route to the main Nissan car plant.

Smart Terminal 1 and Automation in Industrial Logistics

Industry 4.0 has seen many processes scale to high levels of automation, from robotic production at one end of manufacturing to automated order, pick and pack at the other. With 69% of company boardrooms demanding better hyperautomation to improve quality and productivity while reducing cost and environmental impact, the automation of industrial logistics is about to step into the light.